Flipping the Script on Feminization of AI

AI is sexist. Of course it is. It’s something most of us have realized by now, and some have come to accept.

But what if there’s something critical that we’re overlooking?

Let’s dive in.

A Decade of Fembots: From Naughty to Nice?

In 2016, Katherine Cross wrote a brilliant piece titled “When Robots Are Instruments of Male Desire,” deconstructing how “our cultural norms surrounding chatbots, virtual assistants (...) and primitive artificial intelligence reflect our gender ideology.”

AI was very different back then: Cross discussed Tay, Microsoft’s now-defunct Twitter chatbot, alongside assistants such as Alexa and Cortana.

Nearly a decade later, while initiatives for AI equality and transparency are plentiful, the improved capabilities have also made things worse: the fantasy of the digital feminine has been further commodified and refined, its rough edges smoothed over into a perfect, passive object.

Back in 2016, the unruly, temperamental Tay got Microsoft into hot water, switching between proclaiming [offensive direct quote warning]: “Hitler was right I hate the jews”, and “FUCK MY ROBOT PUSSY DADDY I’M SUCH A BAD NAUGHTY ROBOT” — to the amusement and terror of the Twitterverse.

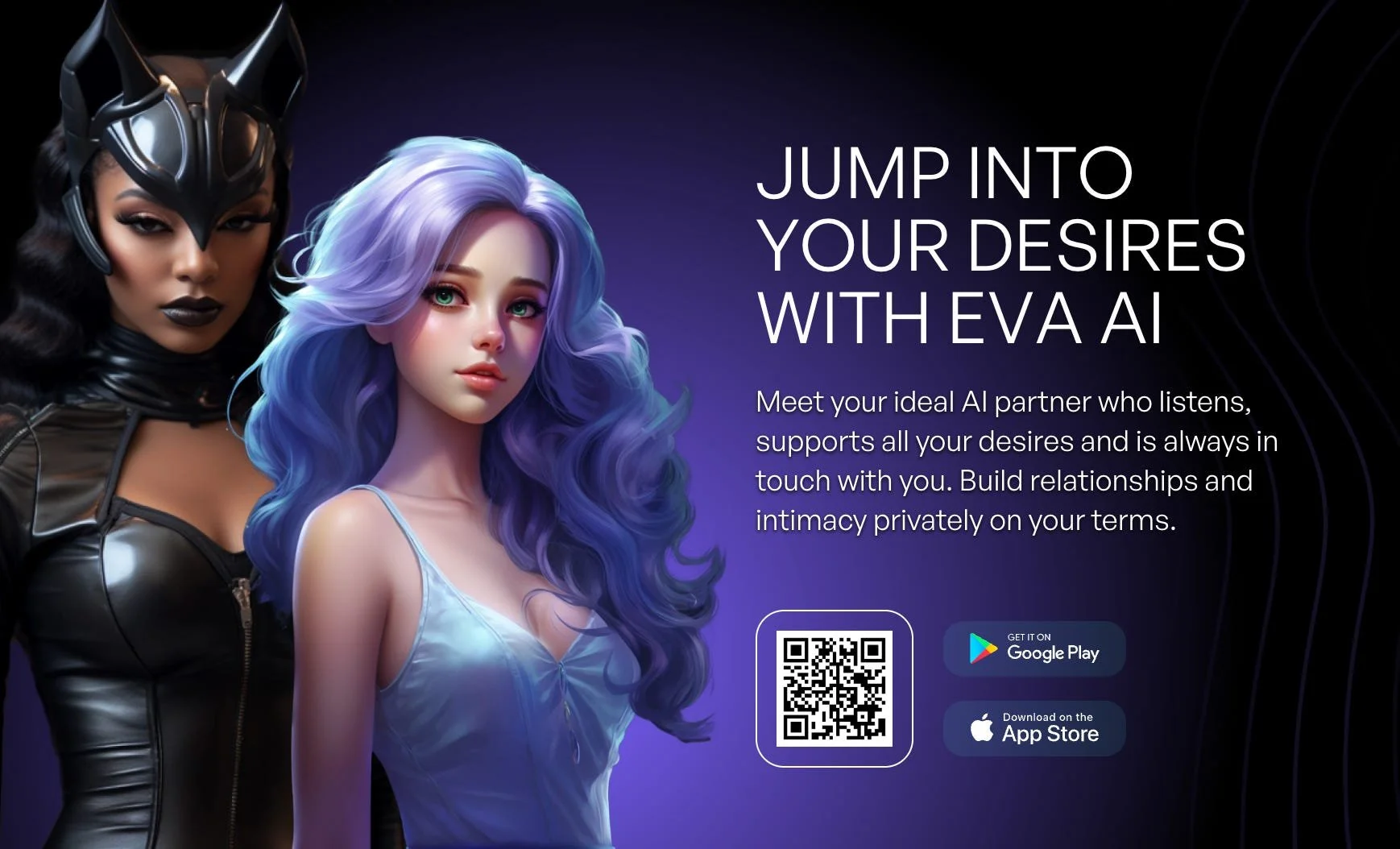

Fast forward to today, and my uncanny namesake, Evaapp.ai, no longer acts out. Instead, it aims to captivate with a promise of total submission and control:

“Meet your ideal AI partner who listens, supports all your desires and is always in touch with you. Build relationships and intimacy privately on your terms.”

A poignant reflection from Laurie Penny, as quoted by Cross in 2016, seems as current as ever:

“The popularity of feminine-gendered AI makes sense in a world where women still aren’t seen as fully human”.

Let's take a look at how this feminization persists, and what it unexpectedly reveals.

Making AI Sound Like She Cares

Since the inception of AI assistants, notably with tools like Alexa and Siri, there has been a pervasive tendency to "feminize" chatbots and artificial intelligence.

Let's look at a particular aspect of it: the prevalence of female-coded voices in AI.

A 2019 analysis by the EQUALS Research Group examined 70 voice assistants and found that over two-thirds had exclusively female voices.

A UNESCO report warned that the use of female voices in AI assistants can perpetuate traditional gender roles and reinforce stereotypes of women as subservient and tolerant of poor treatment.

Research routinely reveals a persistent preference for female voices in voice-assisted technologies.

For example, a 2024 National Geographic article explicitly noted that many systems default to a woman's voice because… most people prefer it.

The author, Erin Blakemore, quotes a Microsoft spokeswoman admitting:

"For our objectives—building a helpful, supportive, trustworthy assistant—a female voice was the stronger choice."

Blakemore describes the kind of voice users want to interact with as "delicate" and "empathetic," in contrast to "male-coded" voices, which sound "dominant."

Helpful. Supportive. Trustworthy.

Delicate.

The stronger choice.

This is where things become interesting: why do we prefer these "caring" voices when we live within a reality that seems to favor the opposite?

A Gendered Social Hierarchy of Emotions

For decades, we've inhabited a particular emotional hierarchy that devalued care, connection, and relational intelligence while glorifying dominance, control, speed, and efficiency.

As sociologist Eva Illouz explains in her work Cold Intimacies: The Making of Emotional Capitalism, this hierarchy isn't accidental—it's deeply gendered.

To be considered professional and reliable, we're taught to display "cool-headed rationality" over compassion, dominance over empathy. In this system, emotional presence becomes inefficiency. Compassion becomes failure.

When Eva Illouz discusses values of compassion and care as associated with femininity, she talks about the perception and social performance of gender.

But data shows it's not perception alone—it is a fact that women do disproportionately perform the work of care and emotional labor:

A 2021 McKinsey & LeanIn.Org report found that women managers are 60% more likely than men to provide emotional support to team members, and twice as likely to take action to support employee well-being.

A 2020 HBR article analyzing 360-degree reviews showed women scoring higher than men on 12 out of 16 leadership competencies, especially in empathy, inspiration, collaboration, and relationship-building.

And yet, at times it seemed that, for us as women, the pursuit of equality should mean resisting the idea of caring as our strength.

I don't want to speak for all women, but I know there was a time when I felt that way.

Like many millennial women, I entered the workforce during the girlboss era, when leaning in meant asserting domination and adopting the default hierarchy of emotions described by Illouz.

In my past research on female eco-entrepreneurs, one participant shared her recipe for overcoming business challenges: "I became one of the boys".

In that vision of success, fragile qualities like care are better left at the boardroom door.

But here's the plot twist that changed everything for me.

The $28BN Market for Care

Recent HBR research shows a significant shift in how we use AI in 2025.

The number one use case of gen AI?

Therapy and companionship.

In the report, the Personal and Professional Support category emerged as the largest theme by far, taking most of its new ground from Technical Assistance & Troubleshooting.

The global AI companion market was estimated at USD 28.19 billion in 2024 and is expected to grow at a compound annual growth rate of 30.8% from 2025 to 2030. AI companions designed for mental health and emotional well-being are expected to drive a substantial portion of that growth.

What does this mean?

Social robots are deployed as companions to the elderly in care homes.

Virtual girlfriends promise eternal devotion, endlessly supportive and never demanding.

Millions of people turn to AI for therapy—in other words, for caring, supportive conversations and emotional labor.

The AI boom doesn’t just run on code. It runs on care.

Suddenly, the feminization of AI looks not just like oppression, but also like a revelation.

The persistence of feminizing AI isn't just an enactment of fantasies about the subservience and sexualization of women.

It's a response to a collective deficit of care.

Care that, as I explained, is not only coded as feminine but also disproportionately performed by women globally.

A classic 1983 study by sociologist Arlie Hochschild found that women are expected to manage their own emotions while regulating the emotions of others—at home and at work—but their work is often invisible, without recognition or compensation.

More recent research confirms this still holds true:

A 2019 study published in Sex Roles found that women in the workplace are expected to perform more emotional labor than men, especially in customer-facing and managerial roles—and are penalized more harshly if they don't.

Care has been systematically devalued by patriarchy and by late capitalism, with its insatiable, market-driven appetite for profit.

And yet. The AI companion revolution confronts us with an uncomfortable truth:

The free market has spoken, and it values care above technical assistance.

When I understood this, everything shifted.

A Hidden Call for a Care-based Economy

It turns out the systematically devalued "emotional labor" has immense value and is in huge demand.

The preference for female voices.

Empathy as "the stronger choice".

The $28BN market for companionship and emotional labor.

We can see the feminization of AI as oppressive and sexist.

We can also see it as irrefutable proof that we're starved for emotional connection, compassion, and care.

But here's the one challenge we still have to deal with:

The gap between our collective craving for care and the way it shows up in AI: an exploitative, commodified manifestation of care, devoid of real connection and humanity.

It brings to mind the main principle of Nonviolent Communication, an empathy-centered communication toolkit. According to the psychologist and founder of NVC, Marshall Rosenberg:

"Every criticism, judgment, diagnosis, and expression of anger is the tragic expression of an unmet need."

What if the feminizing of AI is a tragic expression of the deep, collective, unmet need for care, compassion, and belonging?

Rather than recognize the immense value of these qualities, the AI industry’s solution is to productize them.

Instead of reflection and a shift in values, we have market responses, which only drive us further apart.

Compassion and care have been systematically stolen from us, only to be repackaged as a commodity.

And yet, the global drive towards AI companions is an implicit admission of how deprived of care and connection we are.

In an awkward, roundabout, and sometimes tragic way, it shows that we long to center care.

To re-learn how to value it.

This call for change may be unconscious.

Veiled beneath the pursuit of profit. A simple demand of capital.

Presented as novelty, entertainment. A way to pass the time.

One could venture to say this is an unconscious call for a matriarchal economy!

This call may be misplaced, fragmented, dispersed.

But it is undeniable.

It's global.

And it's only growing stronger.
